Thinking

Everything that’s new and fresh

Our Latest

How climate change is impacting homeowners and what insurance can do about it

by Cake & Arrow

Customer Experience

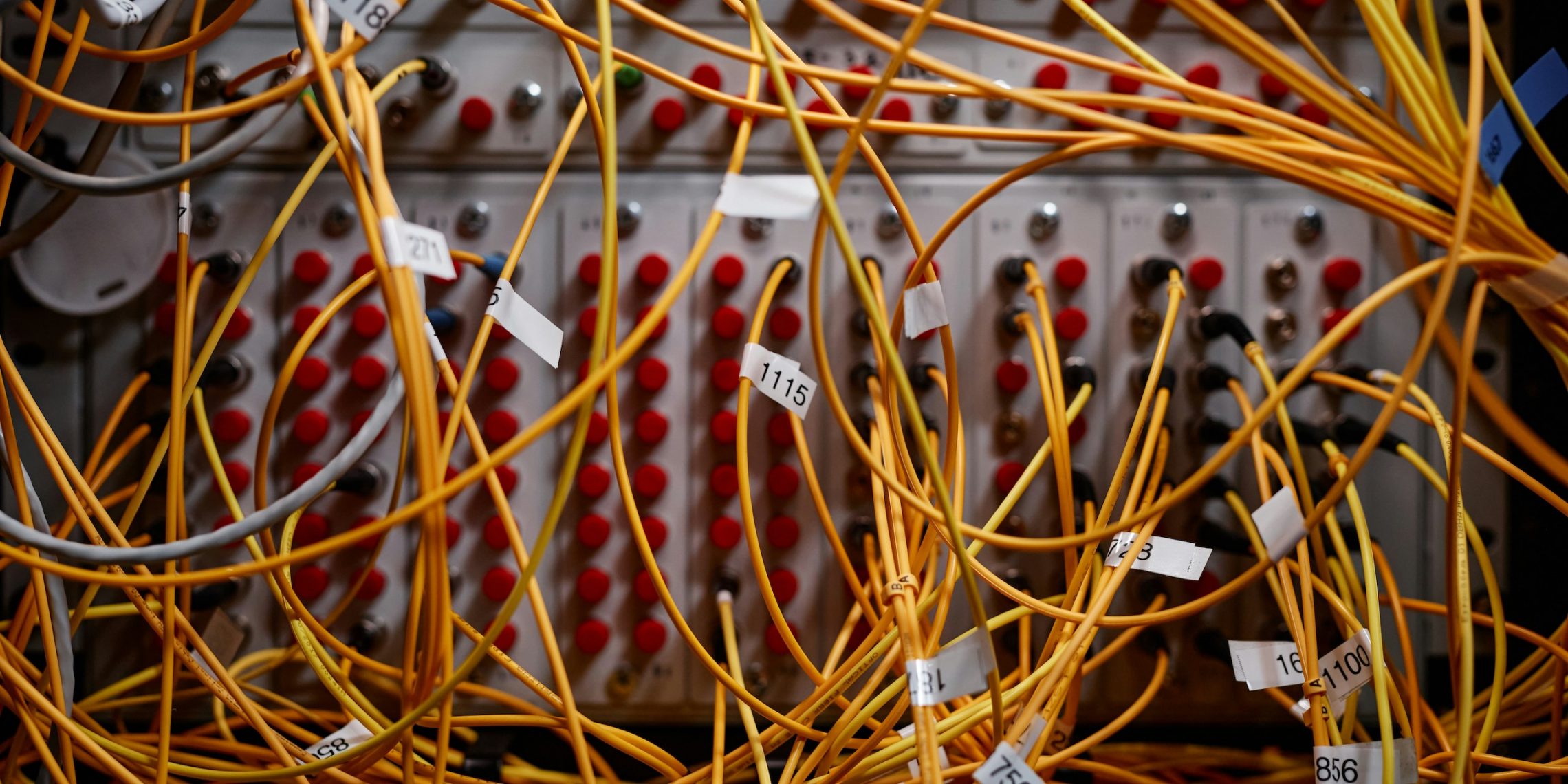

Don’t let legacy systems keep you from modernizing your customer experience

Strategies for improving your customer experience that bypass your legacy systems

Customer Experience

Building confidence in your digital products

How following a CX-driven product development process can help you fail smarter, move faster, and build digital products with confidence.

(Articles only appear in the frontend.)